Every agent will need its own computer.

Satya Nadella said it plainly. Simple sentence. But it carries a lot of weight if you’ve ever tried to take an AI agent from a prototype to something that’s actually running in production, serving real users, handling real data.

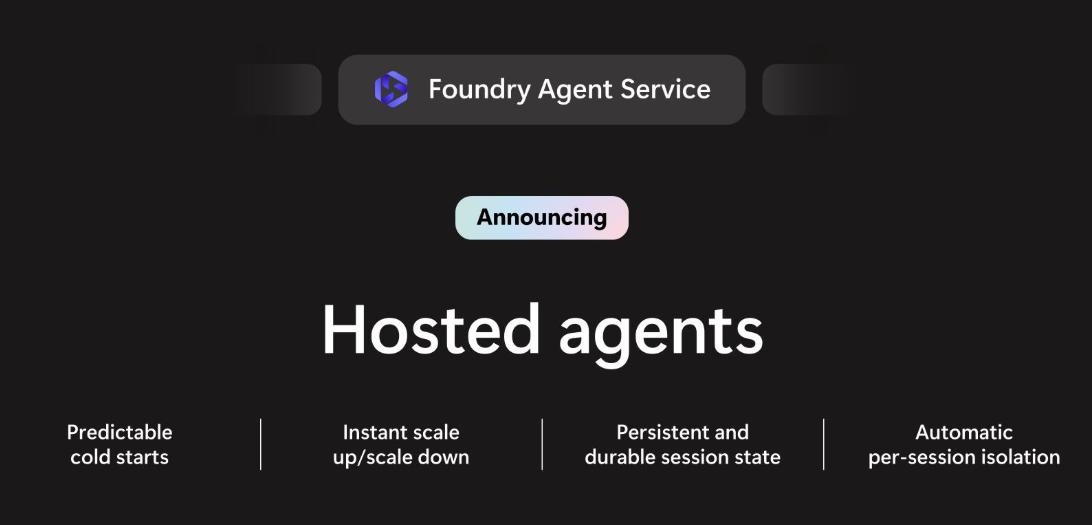

This week, Microsoft released Hosted Agents in Foundry Agent Service — now in public preview. Here’s what it actually is, why it matters, and where it becomes genuinely useful for organisations building serious AI solutions.

What’s the Problem We’re Solving Here?

If you’ve built AI agents before, you know the demo is the easy part.

Getting something to run locally? Straightforward. You spin up a LangGraph flow, wire up some tools, point it at GPT-4o or Claude, and it works. You show it to a stakeholder, they’re impressed, and then the real question comes: “How do we deploy this for 500 users?”

That’s where things get complicated.

Traditional cloud compute — containers, App Services, Azure Functions — was never designed for agents. These platforms were built for web APIs and services, where you have many users sharing the same running instance. That model works fine for a REST endpoint. It does not work for an AI agent that’s writing files, executing code, holding sensitive session context, and potentially accessing credentials.

When Customer A and Customer B are both running sessions on the same shared container, and each agent is writing to a filesystem and running code — that’s not a performance problem. That’s a security problem.

What Hosted Agents Actually Does

Hosted Agents gives every single agent session its own dedicated, VM-isolated sandbox with a persistent filesystem.

Not process isolation. Not containers sharing a host. Actual hypervisor-level isolation, at cloud scale. Each session is its own environment.

Agent sandboxes spin up in seconds. Not “seconds to minutes depending on your luck”: the announcement promises predictable, low-variance cold starts. This matters when you’re building user-facing products.

When a session goes idle, it scales down and you stop paying. When it resumes — your agent picks up exactly where it left off. The working directory, the files it wrote, the state it was building — all still there. This isn’t just cost efficiency. It’s what enables truly long-running agents.

You don’t have to build multi-user isolation yourself. Every session is isolated, and you can use isolation keys to namespace your users’ sessions cleanly.
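To make the namespacing idea concrete, here is a minimal sketch of deriving one stable key per user. Everything in it is an assumption for illustration: the helper name `make_isolation_key`, the `iso-` prefix, and the choice to hash a tenant ID and user ID are mine, not part of the Foundry API. How the service expects an isolation key to be supplied is not shown here.

```python
import hashlib


def make_isolation_key(tenant_id: str, user_id: str) -> str:
    """Derive a stable, opaque key for one user within one tenant.

    Hypothetical helper: the key format is an assumption, not a Foundry API.
    """
    if not tenant_id or not user_id:
        raise ValueError("tenant_id and user_id must be non-empty")
    # Length-prefix each part so ("ab", "c") and ("a", "bc") cannot collide.
    raw = f"{len(tenant_id)}:{tenant_id}|{len(user_id)}:{user_id}"
    digest = hashlib.sha256(raw.encode("utf-8")).hexdigest()
    # Truncated hex digest keeps the key short and free of raw identifiers.
    return f"iso-{digest[:32]}"
```

The same tenant and user always map to the same key, so a returning user lands back in their own namespace, while the hash keeps raw user identifiers out of the key itself.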

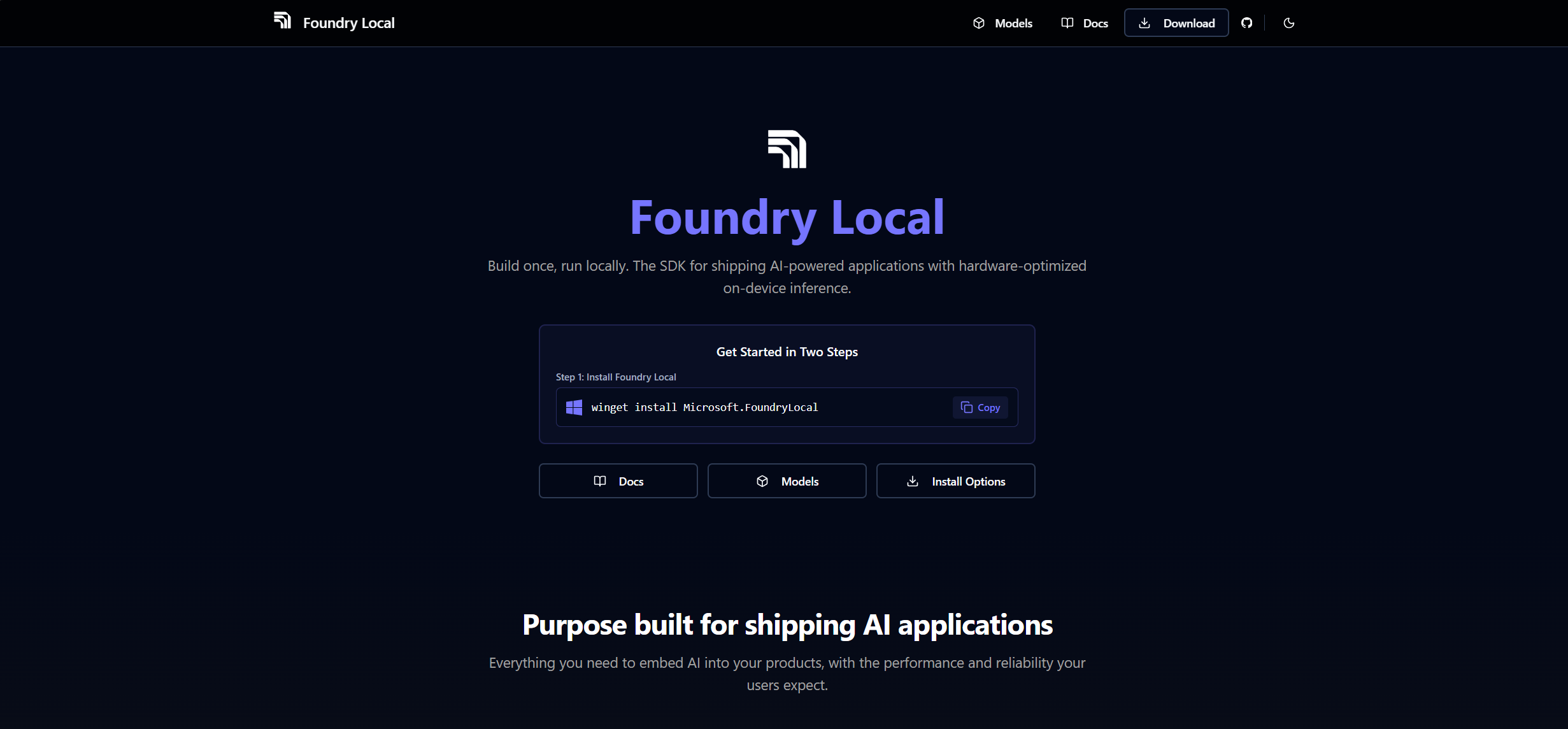

LangGraph, Microsoft Agent Framework, OpenAI Agents SDK, Claude Agent SDK: Foundry doesn’t care which framework you use. Define your environment with a Dockerfile, deploy with azd deploy, and the platform handles the rest.

Where This Becomes Genuinely Useful

There’s a tendency to describe new platform features in vague terms like “enterprise-ready” and “production-grade” without getting specific about what that actually means in practice. Here’s where Hosted Agents becomes a real unlock.

Think about an agent that processes contracts, financial statements, or medical records. These aren’t quick API calls. The agent reads a document, writes intermediate analysis to a file, cross-references another document, builds a structured output. That entire workflow needs persistent state across multiple steps. Previously, you’d need to wire up external storage (Blob Storage, Cosmos DB) just to keep that context alive. With Hosted Agents, the filesystem persists natively within the session.
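The multi-step pattern described above can be sketched as a small checkpointing helper. This is an illustration under assumptions, not Foundry code: `run_step` and the one-JSON-file-per-step layout are hypothetical, and the sketch only assumes that the working directory survives between steps, which is what a persistent session filesystem provides.

```python
import json
from pathlib import Path
from typing import Callable


def run_step(workdir: Path, name: str, compute: Callable[[], dict]) -> dict:
    """Run one workflow step, reusing its saved result if one exists."""
    checkpoint = workdir / f"{name}.json"
    if checkpoint.exists():
        # A resumed session finds the earlier output and skips the work.
        return json.loads(checkpoint.read_text(encoding="utf-8"))
    result = compute()
    # Write to a temp file, then rename, so a crash mid-write
    # never leaves a half-written checkpoint behind.
    tmp = checkpoint.with_name(checkpoint.name + ".tmp")
    tmp.write_text(json.dumps(result), encoding="utf-8")
    tmp.replace(checkpoint)
    return result
```

An agent would call `run_step` once per stage (for example extraction, cross-referencing, final output). If the session goes idle and resumes halfway through, completed stages are read back from disk instead of being recomputed.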

If you’re building an internal AI assistant for a company with 200 employees, you need every user’s session to be isolated. You can’t have user A’s agent context leaking into user B’s session. Hosted Agents gives you that at the infrastructure level — not something you have to engineer yourself.

Meeting prep agents, report generation agents, and overnight data pipeline agents all follow the same pattern: an agent runs for hours, accumulates context, writes intermediate files, and produces an output. Scale-to-zero with state preservation is what makes this economically viable. You’re not paying for an always-on compute instance just to handle bursts of overnight work.

Governance requirements, audit trails, per-user identity — these aren’t afterthoughts in Hosted Agents. Every agent gets its own Entra Agent ID. OBO (on-behalf-of) flows mean agents can act on behalf of specific users with a continuous audit trail. For healthcare, legal, or financial applications, this is the difference between something you can actually take to compliance and something that stays in the pilot phase forever.

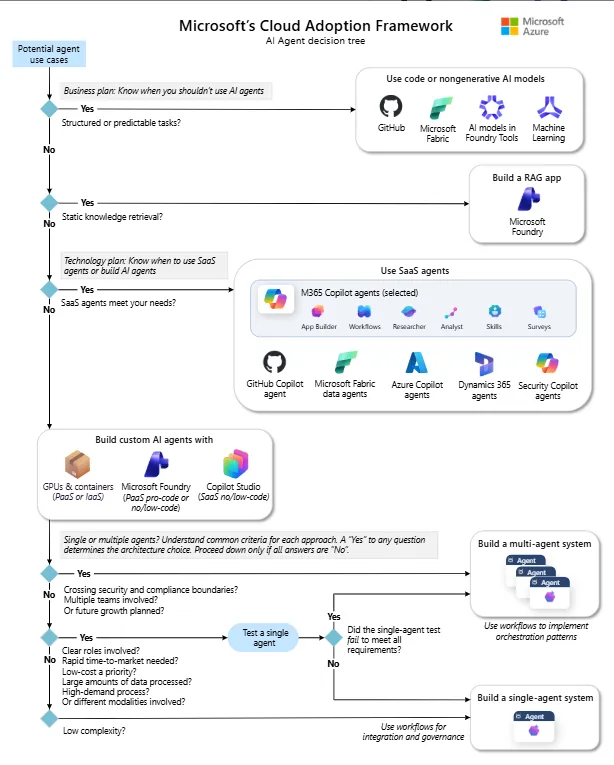

What This Means for the Microsoft AI Stack Specifically

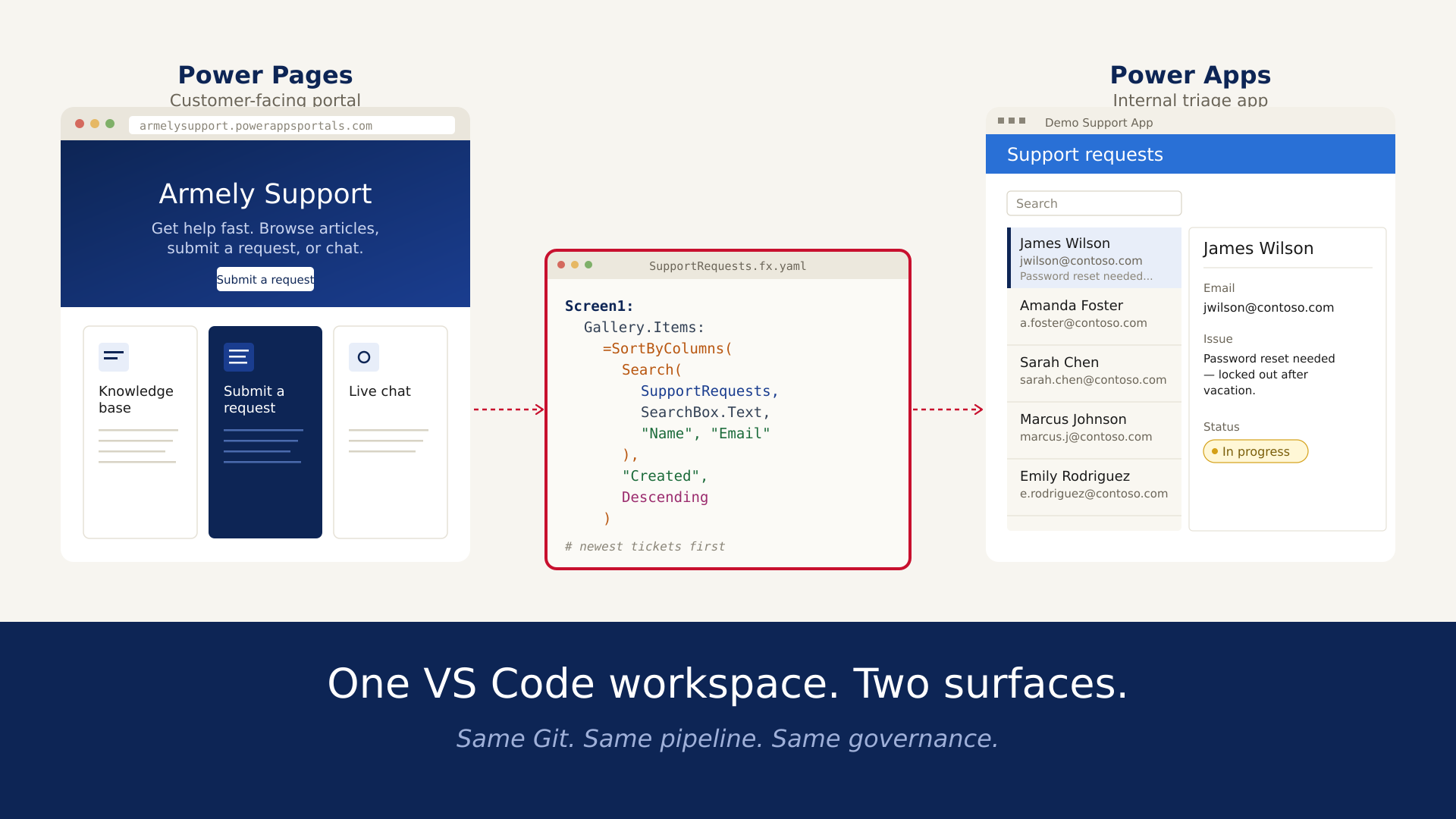

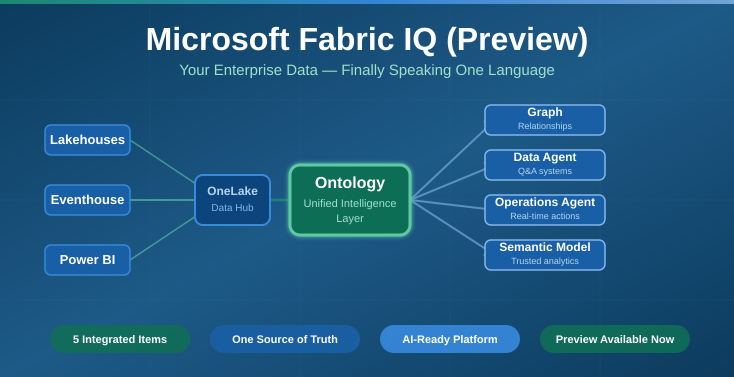

Hosted Agents isn’t a standalone product. It’s deeply wired into the broader Foundry platform, and that integration is where the enterprise value compounds.

Configure tools once, expose them via MCP, handle auth and OAuth passthrough, get observability on every tool call. This is significant for organisations managing multiple agents across teams — a single place to govern what every agent can and can’t do.

Managed long-term memory is built into Foundry Agent Service. Agents remember context across sessions without you provisioning a separate database. If you’ve built this yourself before, you know how much plumbing that typically involves.

OpenTelemetry-based tracing, the AI Red Teaming Agent for adversarial testing, and continuous evaluation are baked in. They are not something you bolt on after the fact.

Publish your agent directly to Teams or M365 Copilot with one click. Your agent is where your users already are.

What I’d Watch Carefully

A few honest notes as this moves from preview to GA.

The BYO VNet support is critical for any enterprise with strict network policies. That’s available, which is good. The question is how smooth the configuration experience is for teams that aren’t deep on Azure networking.

Cold start behaviour under load is the second thing to watch. The announcement promises predictable cold starts, and that’s a meaningful promise. I’d want to validate it at scale before relying on it for a latency-sensitive, user-facing workflow.
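One way to run that validation is to time repeated session starts and look at the spread rather than the average. The sketch below assumes a hypothetical `start_session` callable that you supply and that blocks until the sandbox is ready; it is not a Foundry SDK function, and the choice of p50 and p95 as the summary is mine.

```python
import statistics
import time
from typing import Callable


def measure_cold_starts(start_session: Callable[[], None], runs: int = 20) -> dict:
    """Time repeated session starts and summarise the spread in seconds."""
    if runs < 2:
        raise ValueError("need at least 2 runs to compute percentiles")
    samples = []
    for _ in range(runs):
        begin = time.perf_counter()
        start_session()  # hypothetical: should block until the sandbox is ready
        samples.append(time.perf_counter() - begin)
    # quantiles(n=100) returns 99 cut points; index 49 is p50, index 94 is p95.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "max": max(samples)}
```

A large gap between p50 and p95 is the signal to look for: it means cold starts are fast on average but not predictable. Running this from several concurrent workers would get closer to the “under load” case.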

The framework flexibility is a genuine differentiator. Being able to bring LangGraph or any other orchestration framework and have it work within Hosted Agents’ sandboxing model is not something every platform offers. That’s worth noting if you’ve been locked into vendor-specific frameworks elsewhere.

The Bottom Line

Building agents has never been the hard part. The hard part is building agents that can safely, reliably, and economically serve real users in production — with the isolation, identity, governance, and observability that enterprises actually require.

Hosted Agents in Foundry is a serious answer to that problem. It doesn’t replace your thinking about agent design, tool selection, or model choice. But it removes a significant category of infrastructure concerns that currently eat up engineering time before any business value gets delivered.

If your organisation is exploring AI agents beyond the demo stage, this is worth paying close attention to.